Notes: This example works very well, and the complete source code is available at this site: https://www.sourcecodester.com/tutorials/javascript/11059/real-time-geographical-data-visualization-nodejs-socketio-and-leaflet

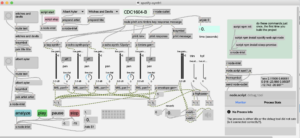

MBTA API in Max

Sonification of Mass Ave buses, from Nubian to Harvard.

Updated for Max8 and Catalina

This patch uses the Max js object to request the current location of buses from the MBTA API. Latitude and longitude data is mapped to oscillator pitch. Data is polled every 10 seconds, but a slower polling rate might give more interesting results, because the vehicle positions don’t seem to update that frequently and buses tend to stop a lot.
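As a rough illustration of the latitude-to-pitch mapping, here is a minimal sketch in Python (the patch itself does this in Max). The latitude bounds and the frequency range are assumptions chosen for the example, not values taken from the patch.

```python
def scale(value, in_lo, in_hi, out_lo, out_hi):
    """Linearly map value from [in_lo, in_hi] to [out_lo, out_hi], clamping to the input range."""
    if in_hi == in_lo:
        raise ValueError("input range must not be empty")
    clamped = min(max(value, in_lo), in_hi)
    t = (clamped - in_lo) / (in_hi - in_lo)
    return out_lo + t * (out_hi - out_lo)

# Assumed bounds: approximate latitudes of Nubian (south) and Harvard (north).
LAT_RANGE = (42.329, 42.374)
# Assumed oscillator range in Hz; the patch may use a different range.
FREQ_RANGE = (110.0, 880.0)

def latitude_to_frequency(lat):
    """Map a bus latitude to an oscillator frequency in Hz."""
    return scale(lat, *LAT_RANGE, *FREQ_RANGE)
```

Clamping keeps a bus that reports a position outside the expected range from producing a pitch outside the chosen band.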

Original project link from 2014: https://reactivemusic.net/?p=17524

MBTA developer website: https://www.mbta.com/developers

This project uses version 3 of the API. There are quality issues with the realtime data. For example, there are bus stops not associated with the route. The direction_id and stop_sequence data from the buses is often wrong. Also, buses that are not in service are not removed from the vehicle list or indicated as such.
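Given those data problems, it can help to pull out only the fields you need and skip vehicles that report no position. The sketch below is in Python rather than the Max js code in mbta.js, and it assumes the JSON:API layout of the v3 vehicles response (a `data` list whose items carry an `attributes` object). The sample payload is invented for illustration and is not real MBTA data.

```python
def parse_vehicles(payload):
    """Return the position-related fields for each vehicle that has coordinates."""
    vehicles = []
    for item in payload.get("data", []):
        attrs = item.get("attributes", {})
        lat, lon = attrs.get("latitude"), attrs.get("longitude")
        if lat is None or lon is None:
            continue  # skip vehicles that report no position
        vehicles.append({
            "id": item.get("id"),
            "latitude": lat,
            "longitude": lon,
            "direction_id": attrs.get("direction_id"),
            "stop_sequence": attrs.get("current_stop_sequence"),
        })
    return vehicles

# Invented sample payload, shaped like a v3 vehicles response.
SAMPLE_RESPONSE = {
    "data": [
        {"id": "y1234", "type": "vehicle",
         "attributes": {"latitude": 42.3601, "longitude": -71.0942,
                        "direction_id": 0, "current_stop_sequence": 12}},
        {"id": "y5678", "type": "vehicle",
         "attributes": {"latitude": None, "longitude": None,
                        "direction_id": 1, "current_stop_sequence": None}},
    ]
}

vehicles = parse_vehicles(SAMPLE_RESPONSE)
```

This only filters out missing coordinates. It does not detect out-of-service buses or wrong direction_id values, which would need extra checks.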

The patch uses a [multislider] object to graph the position of the buses along the route – but due to the data problems described above, the positions don’t always reflect the current latitude/longitude coordinates or the bus stop name.

download

https://github.com/tkzic/internet-sensors

folder: mbta

patches:

- mbta.maxpat

- mbta.js

- poly-oscillator.maxpat

authentication

The API key distributed with the patch is fake. Replace it, in the message object at the top of the patch, with your own key. Removing the key entirely will probably also work. You can request your own developer API key from MBTA. It’s free.
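For reference, this is roughly how a vehicles request could be built with or without a key. It is a sketch in Python, not the code in mbta.js. The host name, the `filter[route]` parameter, the `api_key` query parameter, and the use of route 1 for the Mass Ave bus are assumptions based on the public v3 API, so check them against the MBTA developer documentation.

```python
from urllib.parse import urlencode

# Assumed base URL for version 3 of the MBTA API.
BASE_URL = "https://api-v3.mbta.com"

def vehicles_url(route, api_key=None):
    """Build a vehicles request URL for one route, adding the key only if given."""
    params = {"filter[route]": route}
    if api_key:
        params["api_key"] = api_key
    return f"{BASE_URL}/vehicles?{urlencode(params)}"

# Hypothetical usage: route "1" is assumed to be the Nubian to Harvard bus.
url_without_key = vehicles_url("1")
url_with_key = vehicles_url("1", api_key="YOUR_KEY_HERE")
```

`urlencode` percent-encodes the square brackets in the filter name, which should be equivalent to sending them unencoded.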

instructions

- Open mbta.maxpat

- Open the Max console window so you can see what’s happening with the data

- click on the yellow [getstops] message to get the current bus stop data

- Toggle the metro (at the top of the patch) to start polling

- Turn on the audio (click speaker icon) and turn up the gain

Note: there will be more buses running during rush hours in Boston. Try experimenting with the polling rate and ramp length in the poly-oscillator patch. Also, you can experiment with the pitch range.

Soundcloud API in Max8

This post describes an updated project.

The earlier version can be found here https://reactivemusic.net/?p=5413

This project is part of the internet-sensors project: https://reactivemusic.net/?p=5859

Note

2024/01/21: not working:

Soundcloud deleted my API credentials and is not accepting new requests. So there is really no point to using this project unless you have active credentials.

The console.error() function crashes node (fixed locally).

Overview

In this patch, Max uses the Soundcloud API to search tracks and then select a result to download and play. It uses the node.js soundcloud-api-client https://github.com/iammordaty/soundcloud-api-client

For information on the Soundcloud API: http://developers.soundcloud.com/docs/api/reference

download

https://github.com/tkzic/internet-sensors

folder: soundcloud

files

main Max patch

- sc.maxpat

node.js files

- scnode.js

authorization

- The Soundcloud client-id is embedded in scnode.js. You will need to edit this file to replace the placeholder client-id with your own. To get a client ID you will first need a Soundcloud account. Then register an app at: http://soundcloud.com/you/apps

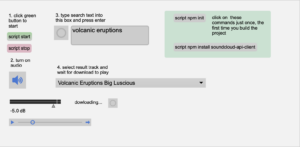

first time instructions

- Open the Max patch: sc.maxpat

- In the green panel, click on [script npm init]

- In the green panel, click on [script install soundcloud-api-client]

instructions

- Open the Max patch sc.maxpat

- Open the Max console window so you can see the API data

- click [script start]

- click the speaker icon to start audio

- type something into the search box and press <enter> or click the button to the left to search for what is already in the box.

- select a track from the result menu, wait for it to start playing

Spotify segment analysis player in Max

Echo Nest API audio analysis data is now provided by Spotify. This project is part of the internet-sensors project: https://reactivemusic.net/?p=5859

There is an older version here using the discontinued Echo Nest API: https://reactivemusic.net/?p=6296

Note: Last tested 2024/01/21

The original analyzer document by Tristan Jehan can be found here (for the time being): https://web.archive.org/web/20160528174915/http://developer.echonest.com/docs/v4/_static/AnalyzeDocumentation.pdf

This implementation uses node.js for Max instead of Ruby to access the API. You will need to set up a developer account with Spotify and request API credentials. See below.

Other than that, the synthesis code in Max has not changed. Some of the following background information and video is from the original version.

What if you used that data to reconstruct music by driving a sequencer in Max? The analysis is a series of time based quanta called segments. Each segment provides information about timing, timbre, and pitch – roughly corresponding to rhythm, harmony, and melody.
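To make the idea concrete, here is a minimal Python sketch that turns one analysis segment into a sequencer event by picking the strongest of its 12 pitch-class values. It assumes the segment fields `start`, `duration`, and `pitches` as they appear in the Spotify audio analysis format. The choice of octave and the "strongest pitch wins" rule are illustrative assumptions, not a description of what the Max patch does.

```python
NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]

def segment_to_event(segment, octave=4):
    """Convert one analysis segment into a note event using its strongest pitch class."""
    pitches = segment["pitches"]
    if len(pitches) != 12:
        raise ValueError("expected 12 pitch-class values")
    pitch_class = max(range(12), key=lambda i: pitches[i])
    midi_note = 12 * (octave + 1) + pitch_class  # MIDI convention: C4 = 60
    return {
        "start": segment["start"],
        "duration": segment["duration"],
        "midi_note": midi_note,
        "note_name": NOTE_NAMES[pitch_class],
        "strength": pitches[pitch_class],
    }

# Invented example segment in which A is the dominant pitch class.
example_segment = {
    "start": 1.25,
    "duration": 0.30,
    "pitches": [0.1, 0.0, 0.2, 0.0, 0.3, 0.1, 0.0, 0.4, 0.0, 1.0, 0.0, 0.2],
}
event = segment_to_event(example_segment)
```

A sequencer would schedule each event at its `start` time and hold it for `duration` seconds.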

spotify-synth1.maxpat

download

https://github.com/tkzic/internet-sensors

folder: spotify2

files

main Max patch

- spotify-synth1.maxpat

abstractions and other files

- polyvoice-sine.maxpat

- polyvoice2.maxpat

node.js code

- spot1.js

node folders and infrastructure

- /node_modules

- package-lock.json

- package.json

dependencies:

- You will need to install node.js

- the node package manager will do the rest – see below.

Note: Your best bet is to just download the repository, leave everything in place, and run it from the existing folder

authentication

You will need to sign up for a developer account at Spotify and get an API key. https://developer.spotify.com/documentation/general/guides/authorization-guide/

Edit spot1.js, replacing the clientID and clientSecret with your Spotify credentials.
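If you want to see what those two credentials are used for, the sketch below builds a token request in the style of Spotify's client credentials flow. It is written in Python for illustration. Whether spot1.js uses this exact flow is an assumption, and the function name is hypothetical. No network request is made here.

```python
import base64
from urllib.parse import urlencode

# Token endpoint used by Spotify's client credentials flow.
TOKEN_URL = "https://accounts.spotify.com/api/token"

def build_token_request(client_id, client_secret):
    """Return (url, headers, body) for a client-credentials token request."""
    credentials = f"{client_id}:{client_secret}".encode("utf-8")
    headers = {
        "Authorization": "Basic " + base64.b64encode(credentials).decode("ascii"),
        "Content-Type": "application/x-www-form-urlencoded",
    }
    body = urlencode({"grant_type": "client_credentials"})
    return TOKEN_URL, headers, body

# Placeholder credentials; substitute the values from your Spotify developer dashboard.
url, headers, body = build_token_request("my-client-id", "my-client-secret")
```

The response to a real request would contain an access token, which is then sent as a Bearer token with each API call.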

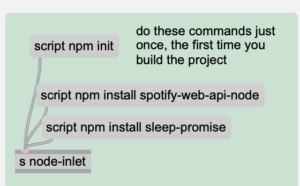

node for max install instructions (first time only)

- Open the Max patch: spotify-synth1.maxpat

- Scroll the patch over to the far right side until you see this green panel:

- Click the [script npm init] message – this initializes the node infrastructure in the current folder

- Then click each of the 2 script npm install messages – this installs the necessary libraries

Instructions

- Open the Max patch: spotify-synth1.maxpat

- Click the green [script start] message

- Click the Speaker icon to start audio

- Click the first dot in the preset object to set the mixer settings to something reasonable

- open the Max Console window so you can see the Spotify API data

- From the 2 menus at the top of the screen select an Artist and Title that match, for example: Albert Ayler and “Witches and Devils”

- Click the [analyze] button. The console window should fill with interesting data about your selection.

- Click [play]

- Note: if you hear a lot of clicks and pops, reduce the audio sample rate to 44.1 kHz.

Alternative search method:

Enter an Artist and Song title for analysis, in the text boxes. Then press the buttons for title and artist. Then press the /analyze button. If it works you will get prompts from the terminal window, the Max window, and you should see the time in seconds in upper right corner of the patch.

troubleshooting

If there are problems with the analysis, it’s most likely due to one of the following:

- artist or title spelled incorrectly

- song is not available

- song is too long

- API is busy

Mixer controls

The Mixer channels from Left to right are:

- bass

- synth (left)

- synth (right)

- random octave synth

- timbre synth

- master volume

- gain trim

- HPF cutoff frequency

programming notes

Best results happen with slow abstract material, like the Miles (Wayne Shorter) piece above. The bass is not really happening. Lines all sound pretty much the same. I’m thinking it might be possible to derive a bass line from the pitch data by doing a chordal analysis of the analysis.

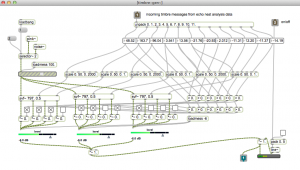

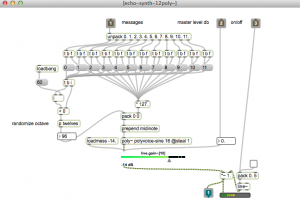

Here are screenshots of the Max sub-patches (the main screen is in the video above)

Timbre (percussion synth) – plays filtered noise:

Random octave synth:

Here’s a Coltrane piece, using roughly the same configuration but with sine oscillators for everything:

There are issues with clicks on the envelopes and the patch is kind of a mess but it plays!

Several modules respond to the API data:

- tone synthesizer (pitch data)

- harmonic (random octave) synthesizer (pitch data)

- filtered noise (timbre data)

- bass synthesizer (key and mode data)

- envelope generator (loudness data)
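As one example of driving a module from the data, here is a hedged Python sketch of an envelope built from a segment's loudness values. It assumes the segment fields `loudness_start`, `loudness_max`, `loudness_max_time`, and `duration` from the Spotify analysis format, with loudness in dB. The -60 dB floor and the decay to silence at the end of the segment are assumptions for the example, not the patch's actual envelope.

```python
def db_to_amplitude(db, floor_db=-60.0):
    """Convert a loudness value in dB to a linear amplitude between 0.0 and 1.0."""
    if db <= floor_db:
        return 0.0  # treat anything at or below the floor as silence
    return min(1.0, 10 ** (db / 20.0))

def segment_envelope(segment):
    """Return (time, amplitude) breakpoints: onset level, peak, then decay to silence."""
    peak_time = min(segment["loudness_max_time"], segment["duration"])
    return [
        (0.0, db_to_amplitude(segment["loudness_start"])),
        (peak_time, db_to_amplitude(segment["loudness_max"])),
        (segment["duration"], 0.0),
    ]

# Invented example segment.
envelope = segment_envelope({
    "duration": 0.5,
    "loudness_start": -60.0,
    "loudness_max_time": 0.05,
    "loudness_max": -20.0,
})
```

The breakpoints could be fed to a line-segment generator. Ramping between them, rather than jumping, is one way to reduce envelope clicks.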

Since the key/mode data is global for the track, bass notes are probable guesses. This method doesn’t work for material with strong root motion or a variety of harmonic content. It’s essentially the same approach I use when asked to play bass at an open mic night.
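A minimal Python sketch of that kind of guess follows. It assumes the Spotify conventions for the track-level fields (`key` from 0 to 11 with C as 0, -1 when no key was detected, and `mode` 1 for major, 0 for minor). Offering the root, third, and fifth as candidates is an illustrative choice, not necessarily how the patch picks its bass notes.

```python
MAJOR_SCALE = [0, 2, 4, 5, 7, 9, 11]
MINOR_SCALE = [0, 2, 3, 5, 7, 8, 10]

def bass_candidates(key, mode, octave=2):
    """Return MIDI notes for the root, third, and fifth of the track's key."""
    if key < 0:
        return []  # no key detected, so there is nothing to guess from
    scale = MAJOR_SCALE if mode == 1 else MINOR_SCALE
    root = 12 * (octave + 1) + key  # MIDI convention: C2 = 36
    return [root + scale[degree] for degree in (0, 2, 4)]

c_major_bass = bass_candidates(0, 1)
a_minor_bass = bass_candidates(9, 0)
```

Because these notes come from a single global key, they stay the same for the whole track, which is why the approach breaks down when the harmony moves.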

additional notes

Now that this project is running again, I plan to write additional synthesizers that follow more of the spirit of the data. For example, distinguishing strong pitches from noise.

I would also like to make use of the [section] data as well as the rhythmic analysis. There is an amazing amount of potential here.

Gqrx SDR with Max Mac OS

More notes: 2024/12/15:

It looks like you can pipe a stereo UDP audio stream out of GQRX. See this note (from https://github.com/gqrx-sdr/gqrx/issues/868#issuecomment-727695048):

As a basis, maybe a 48ksps complex IQ output is a good idea.

This already exists. Select “Raw I/Q” mode in the Receiver Options panel, widen the filter, then click the “…” button in the lower right corner of the Audio panel and enable the Stereo option in the Network tab.
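If the stereo UDP stream works as described, each packet could be decoded into complex I/Q samples along these lines. This is a Python sketch under several assumptions: that the stream is raw 16-bit signed little-endian PCM, that the left channel carries I and the right channel carries Q, and that gqrx sends to UDP port 7355. None of this has been verified against the Max setup described here.

```python
import socket
import struct

def decode_iq(payload):
    """Decode interleaved 16-bit little-endian stereo PCM into complex samples (assumed I=left, Q=right)."""
    frame_count = len(payload) // 4  # 2 channels x 2 bytes per sample
    values = struct.unpack(f"<{frame_count * 2}h", payload[:frame_count * 4])
    return [complex(values[i] / 32768.0, values[i + 1] / 32768.0)
            for i in range(0, len(values), 2)]

def receive_iq(port=7355, host="127.0.0.1"):
    """Yield lists of complex samples from incoming UDP packets (blocks until data arrives)."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.bind((host, port))
        while True:
            payload, _addr = sock.recvfrom(65535)
            yield decode_iq(payload)

# Decode a hand-built two-frame packet without touching the network.
test_packet = struct.pack("<4h", 16384, -16384, 0, 32767)
samples = decode_iq(test_packet)
```

Any trailing bytes that do not make up a full stereo frame are dropped, so a truncated packet does not raise an error.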

Notes gqrx – piping I/Q audio stream into Max

Update: Looks like we need to widen the filter, as described above, and consider using UDP streamed audio as an alternative. But it’s now working well with GQRX 2.17.6 on an M3 Mac running Sequoia.

Will need to find out if it’s possible for Max to read UDP audio streams, and if it’s possible to increase the sample rate beyond 48k.

Also, we can build a scaled-down SDR in Max and send the frequency data via the sadam TCP libraries.

https://github.com/csete/gqrx Information here on adding hardware drivers.

Install gqrx with macports:

sudo port install gqrx

Install gtelnet with macports (Mac OS has jettisoned telnet)

sudo port install inetutils

Here’s a list of telnet commands that work with gqrx: https://gqrx.dk/doc/remote-control

For some reason gqrx is not accepting IPv4 addresses. Need to telnet the frequency commands using this:

gtelnet ::ffff:127.0.0.1 7356

Actually, this netcat command works too. Make sure to use double quotes:

echo "F 7015000" | nc -w 1 ::ffff:127.0.0.1 7356

update 2024/12/14: this simpler command works with current gqrx

echo 'F 7033000' | nc -w 1 127.0.0.1 7356
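The same frequency command can be sent from a script. Here is a minimal Python sketch, assuming gqrx remote control is enabled and listening on 127.0.0.1 port 7356 as in the commands above. The function names are made up for this example, and the reply text is not checked because it depends on gqrx.

```python
import socket

def frequency_command(hz):
    """Format a set-frequency command, e.g. b'F 7033000' followed by a newline."""
    return f"F {int(hz)}\n".encode("ascii")

def set_frequency(hz, host="127.0.0.1", port=7356, timeout=1.0):
    """Send the command to gqrx over TCP and return whatever reply comes back."""
    with socket.create_connection((host, port), timeout=timeout) as sock:
        sock.sendall(frequency_command(hz))
        return sock.recv(64).decode("ascii").strip()

# Building the command needs no running gqrx; set_frequency() does.
command = frequency_command(7033000)
```

Calling `set_frequency(7033000)` with gqrx running should retune the receiver. If gqrx is not running, it raises a connection error.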

In gqrx, select the I/Q demodulator and set the audio output to BlackHole 2ch.

For some reason, you can’t set the audio output sample rate to anything other than 48 kHz. This is apparently a feature. So the I/Q output bandwidth is limited, and therefore there is no wideband FM.

Unfortunately UDP audio streaming is limited to 1 channel, so no chance of I/Q streaming: https://gqrx.dk/doc/streaming-audio-over-udp

Building SoapySDR and CubicSDR with Linux

How to build SoapySDR and CubicSDR from source in Ubuntu 20.04

After unsuccessful attempts to compile SoapySDR and CubicSDR on Mac OS and Windows, I was able to get it running in Ubuntu 20.04. The whole process took about 2 hours but could be done in less time if you know what you are doing.

It wasn’t really necessary to install CubicSDR to test SoapySDR. But CubicSDR is the only SoapySDR app that consistently works when it comes to devices, I/Q, files and TCP frequency control.

After completing this install I was also able to compile and run the SoapySDR example code here: https://github.com/pothosware/SoapySDR/wiki/Cpp_API_Example

Build

There are excellent instructions at the CubicSDR wiki on github: https://github.com/cjcliffe/CubicSDR/wiki/Build-Linux

I also added the rtlsdr driver and the airspyhf driver. Instructions for rtlsdr are on the wiki. Instructions for airspyhf are below. You can add these drivers/libraries at any time, after SoapySDR is installed.

There were several missing libraries – described here.

hamlib

Hamlib is an option in CubicSDR. It was included because we’re using it to send frequency data to the devices via rigctld. You’ll need to install hamlib before you try to compile CubicSDR.

Instructions and source code here: https://github.com/Hamlib/Hamlib

Instructions are somewhat vague. Here’s what I did.

Install libtool:

sudo apt install libtool

Clone the repository, build, and install:

git clone https://github.com/Hamlib/Hamlib.git

cd Hamlib

./bootstrap

./configure

make

sudo make install

airspyhf

airspyhf library code from Airspy. Instructions here: https://github.com/airspy/airspyhf

This is what I did, if I can remember correctly. You may need to install libusb, but if you have done all the stuff above you probably already have it.

git clone https://github.com/airspy/airspyhf

cd airspyhf

mkdir build

cd build

cmake ../ -DINSTALL_UDEV_RULES=ON

make

sudo make install

sudo ldconfig

SoapyAirspyHF

Now you can add the Soapy AirspyHF drivers. Instructions here: https://github.com/pothosware/SoapyAirspyHF/wiki

git clone https://github.com/pothosware/SoapyAirspyHF.git

cd SoapyAirspyHF

mkdir build

cd build

cmake ..

make

sudo make install