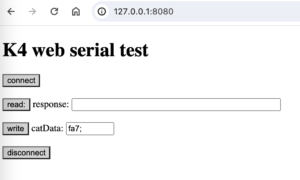

Notes for updating from Max6 to Max8 in Mac OS Catalina

In general, 32-bit code will not work, because Catalina no longer runs 32-bit software.

Link to internetsensors project: https://reactivemusic.net/?p=5859

GitHub: https://github.com/tkzic/internet-sensors

1. mxj object

The Java install that mxj depends on needs to be updated, but the Oracle link leads to a dead-end message. Go to the Oracle download page https://www.oracle.com/java/technologies/javase/javase-jdk8-downloads.html but instead of pressing the green download button, Ctrl-click it and save the link, as described in the instructions from intrepidOlivia in this link: https://gist.github.com/wavezhang/ba8425f24a968ec9b2a8619d7c2d86a6

2. aka.objects

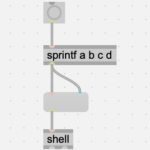

I have used aka.shell and aka.speech, among others. These objects no longer work. Replace aka.shell with Jeremy Bernstein’s shell object: https://github.com/jeremybernstein/shell/releases/tag/1.0b2

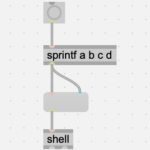

NOTE: There’s a problem with [shell]: it rejects input that has been converted to a symbol using [tosymbol].

This can be fixed by using [fromsymbol], or by just eliminating [tosymbol]. That may affect the stderr/stdout redirection token (i.e., “>”) and other special characters, but for now [shell] does not accept symbol input.

aka.speech can be replaced by the “say” command in the shell. More details to follow about voice parameters.

‘say’ has similar parameters to aka.speech, e.g., voice name and rate. There are voices for specific languages. This feature could be used, for example, to match the language of a Tweet to an appropriate voice.
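As a rough sketch of the voice and rate parameters, here is a small Python helper that assembles a ‘say’ command line. The function names are made up for illustration, and “Alex” is only an example voice; `say -v '?'` lists the voices actually installed. The `-v` (voice) and `-r` (words per minute) flags are the standard macOS ones.

```python
import shlex

def build_say_command(text, voice=None, rate=None):
    """Return the argument list for the macOS `say` command.

    voice: name of an installed voice (passed with -v), optional
    rate:  speaking rate in words per minute (passed with -r), optional
    """
    cmd = ["say"]
    if voice:
        cmd += ["-v", voice]
    if rate:
        cmd += ["-r", str(rate)]
    cmd.append(text)
    return cmd

def as_shell_line(cmd):
    """Quote each argument so the command can be sent to a shell as one line."""
    return " ".join(shlex.quote(part) for part in cmd)

# Example: the line that could be handed to a shell
print(as_shell_line(build_say_command("hello world", voice="Alex", rate=180)))
```

Quoting each argument keeps spaces and special characters in the text from being interpreted by the shell.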

3. Twitter streaming API

I revised the php code for the Twitter streaming project, to use the coordinates of a corner of the city polygon bounding box. That seems to be more reliable than the geo coordinates which are absent from most Tweets.
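The project code for this is PHP; the following is only a Python sketch of the same idea, not the actual implementation. It assumes the classic Tweet JSON layout, where `place.bounding_box.coordinates` holds one ring of `[longitude, latitude]` corners. The function name is hypothetical.

```python
def bounding_box_corner(tweet):
    """Return (longitude, latitude) of the first corner of the tweet's
    place bounding box, or None if the tweet has no place information."""
    place = tweet.get("place") or {}
    box = place.get("bounding_box") or {}
    rings = box.get("coordinates") or []
    if rings and rings[0]:
        lon, lat = rings[0][0]  # GeoJSON order: longitude first
        return (lon, lat)
    return None

# Example with a hand-written tweet fragment
sample = {
    "place": {
        "bounding_box": {
            "type": "Polygon",
            "coordinates": [[[-74.03, 40.68], [-74.03, 40.88],
                             [-73.91, 40.88], [-73.91, 40.68]]],
        }
    }
}
print(bounding_box_corner(sample))
```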

There is a new API in the works, but it’s difficult to decipher the Twitter API docs because they cover so many products and the documentation is obtuse.

Also, it would be interesting to extract the “language” field and use it to select which voice to use in the speech synthesizer, or even to offer an English translation option.
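A minimal Python sketch of that idea, assuming the language arrives in the Tweet’s `lang` field as a short code such as “en” or “fr”. The voice names in the table are examples only and may not be installed on a given Mac; the function name is hypothetical.

```python
# Example mapping from language code to a macOS voice name.
# These voice names are assumptions; check the installed voices first.
VOICE_BY_LANGUAGE = {
    "en": "Alex",
    "fr": "Thomas",
    "de": "Anna",
    "es": "Monica",
}
DEFAULT_VOICE = "Alex"

def voice_for_tweet(tweet, voices=VOICE_BY_LANGUAGE, default=DEFAULT_VOICE):
    """Pick a voice from the tweet's language code, falling back to a default
    when the code is missing or not in the table."""
    lang = (tweet.get("lang") or "").lower()
    return voices.get(lang, default)

print(voice_for_tweet({"lang": "fr", "text": "Bonjour"}))
```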

4. Echonest API

Echonest was absorbed into Spotify and its API is gone. The Spotify API does have some of the feature detection and analysis code, but it doesn’t allow you to submit your own audio clips. There are also some efforts to preserve parts of Echonest, like the blog by Paul Lamere and the remix code. Here are a few links I found to get started.

Spotify API (features) https://developer.spotify.com/console/get-audio-features-track/?id=06AKEBrKUckW0KREUWRnvT

Echonest blog: https://blog.echonest.com/

Amen – algorithmic remix project: https://github.com/algorithmic-music-exploration/amen
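For the Spotify features link above, here is a hedged Python sketch of what the request looks like outside the web console. It assumes the `https://api.spotify.com/v1/audio-features/{id}` endpoint and a bearer token obtained separately; both the token and the function name are placeholders, and the endpoint’s availability may have changed. The request is only built here, not sent.

```python
import urllib.request

def audio_features_request(track_id, access_token):
    """Build (but do not send) a GET request for a track's audio features."""
    url = f"https://api.spotify.com/v1/audio-features/{track_id}"
    headers = {"Authorization": f"Bearer {access_token}"}
    return urllib.request.Request(url, headers=headers)

# Track id taken from the console link above; the token is a placeholder.
req = audio_features_request("06AKEBrKUckW0KREUWRnvT", "YOUR_TOKEN")
print(req.get_method(), req.full_url)
```

Sending it would be a matter of passing `req` to `urllib.request.urlopen` and decoding the JSON body.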

5. Google speech to text

Several issues:

- Replacing [aka.shell] with [shell] – instead of using [tosymbol], this workaround seems to help

- I have now rewritten all of the recording code and the shell interactions with Google.

- Still need to work on voice options for the ‘say’ command (text to speech)

- The pandorabots API problems turned out to be caused by the URL, which needed to be https instead of http

6. Twitter curl project

Looks like xively.com is gone. Maybe purchased by Google? Anyway, this project is toast.

7. Twitter via Ruby

Got this working again.

8. Bird calls from xeno-canto.org

This patch has been completely rewritten because the old API was obsolete. This version uses [dict] and [maxurl] to format and execute the initial query. Then it uses [jit.uldl] to download the mp3 file with the bird-call audio. Interestingly, [maxurl] would not download the file using the “download” URL; it only worked with a URL containing the actual file name.
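Outside of Max, the query step can be sketched in Python roughly as follows. This assumes the version 2 recordings endpoint and a JSON reply with a `recordings` list whose entries carry `file` (the “download” URL) and `file-name` fields; those names are assumptions and should be checked against the current xeno-canto API docs. The function names are hypothetical and nothing is fetched here.

```python
import json
from urllib.parse import urlencode

# Assumed endpoint for version 2 of the xeno-canto API
API_URL = "https://xeno-canto.org/api/2/recordings"

def build_query_url(species):
    """Return the query URL for a species name, URL-encoded."""
    return API_URL + "?" + urlencode({"query": species})

def first_recording(response_text):
    """Pull the download URL and file name of the first recording
    out of a JSON reply, or return None if there are no recordings."""
    data = json.loads(response_text)
    recordings = data.get("recordings") or []
    if not recordings:
        return None
    rec = recordings[0]
    return {"download_url": rec.get("file"), "file_name": rec.get("file-name")}

print(build_query_url("Turdus merula"))
```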

9. ping

Needed to reinstall ruby gems using xcrun (see above)

There seems to be a problem with Mashape:

Could not resolve host: igor-zachetly-ping-uin.p.mashape.com (Patron::HostResolutionError)

[Mashape was acquired by rapidapi.com, so the code in the Ruby server will need to be refactored.]