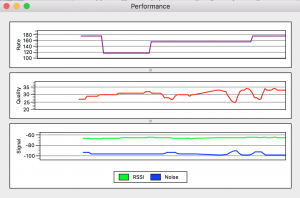

Mac OS Wi-Fi Diagnostics

“Wireless Diagnostics” is a built-in Mac OS app for monitoring Wi-Fi signal and noise.

Instructions:

- Hold the Option key and click the Wi-Fi icon in the menu bar.

- Select “Open Wireless Diagnostics.”

- Open the individual diagnostic screens from the Window menu.

More information from Apple: https://support.apple.com/en-us/HT202663
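The Option-click Wi-Fi menu and the diagnostics windows typically report signal strength (RSSI) and noise as power levels in dBm. Because both are logarithmic, the signal-to-noise ratio in dB is simply their difference. A minimal Python sketch; the function names and the sample readings are hypothetical and not taken from Apple's tools:

```python
def snr_db(rssi_dbm: float, noise_dbm: float) -> float:
    """Signal-to-noise ratio in dB from two power readings in dBm."""
    # Both values are dB relative to 1 mW, so the power ratio
    # becomes a plain subtraction in the log domain.
    return rssi_dbm - noise_dbm


def dbm_to_mw(dbm: float) -> float:
    """Convert a power level in dBm to milliwatts."""
    return 10 ** (dbm / 10)


if __name__ == "__main__":
    # Hypothetical readings, as they might appear for RSSI and Noise
    rssi, noise = -60.0, -90.0
    print(f"SNR: {snr_db(rssi, noise):.0f} dB")
    print(f"Signal is {dbm_to_mw(rssi) / dbm_to_mw(noise):.0f} times the noise power")
```

A higher (less negative) RSSI or a lower noise floor both raise the SNR, which is usually the more useful number when comparing positions or channels.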

RF noise reduction

Notes

- PA0SIM DSP noise reduction: http://www.pa0sim.nl/Software.htm

- Roger Rehr, W3SZ – various methods: http://www.nitehawk.com/w3sz/w3szdspnew.htm

- Cedar Audio: http://www.cedaraudio.com/

- Noise reduction plugins: http://www.sonicscoop.com/2013/05/30/the-best-noise-reduction-plugins-on-the-market/

- Sound On Sound (SOS), noise reduction tools and techniques: http://www.soundonsound.com/sos/jan12/articles/noise-reduction.htm
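One classic DSP noise-reduction technique used in amateur-radio receivers is the LMS adaptive line enhancer: an adaptive filter predicts each sample from slightly older samples, so correlated tones survive while uncorrelated noise is attenuated. The Python sketch below is a generic textbook version, not the algorithm used by PA0SIM, W3SZ, or any of the linked products; the tap count, delay, step size `mu`, and the synthetic test signal are assumed example values.

```python
import math
import random


def lms_noise_reduction(samples, taps=32, delay=1, mu=0.01):
    """LMS adaptive line enhancer (one classic DSP noise-reduction method).

    Each output sample is the filter's prediction of the current input from
    inputs `delay` or more samples in the past. Tones are correlated from
    sample to sample and can be predicted; white noise cannot, so the
    prediction keeps the tones and attenuates the noise.
    """
    if delay < 1:
        raise ValueError("delay must be at least 1 sample")
    weights = [0.0] * taps
    history = [0.0] * (delay + taps)  # history[0] = previous input sample
    output = []
    for x in samples:
        ref = history[delay - 1:delay - 1 + taps]  # x[n-delay] ... x[n-delay-taps+1]
        y = sum(w * r for w, r in zip(weights, ref))  # prediction of x[n]
        err = x - y  # prediction error drives the adaptation
        for i in range(taps):
            weights[i] += 2.0 * mu * err * ref[i]  # LMS weight update
        output.append(y)
        history.insert(0, x)
        history.pop()
    return output


if __name__ == "__main__":
    # Synthetic test signal: a tone plus Gaussian noise (hypothetical values).
    random.seed(1)
    n = 4000
    clean = [math.sin(2 * math.pi * 0.05 * k) for k in range(n)]
    noisy = [c + random.gauss(0.0, 0.5) for c in clean]
    enhanced = lms_noise_reduction(noisy)

    def mse(a, b):
        return sum((p - q) ** 2 for p, q in zip(a, b)) / len(a)

    # Compare after the filter has had time to converge.
    print("noisy MSE:   ", mse(noisy[n // 2:], clean[n // 2:]))
    print("enhanced MSE:", mse(enhanced[n // 2:], clean[n // 2:]))
```

The step size `mu` trades convergence speed against residual noise; too large a value makes the LMS filter unstable.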

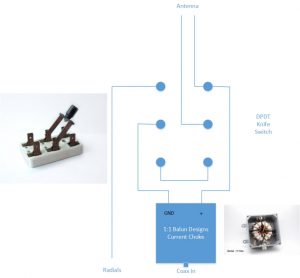

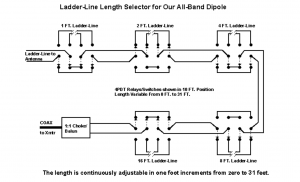

Switched tee/doublet antenna

Ladder-line antenna tuner

Impedance matching by adding/subtracting open wire transmission line

A simple antenna tuner made from switches. How to determine the “best” transmission line lengths for multi-band center-fed wire dipoles.

by Cecil Moore, W5DXP
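Adding or subtracting line works because a length of transmission line transforms the load impedance. For a lossless line the standard relation is Z_in = Z0 (ZL + j Z0 tan βl) / (Z0 + j ZL tan βl), where βl is the electrical length in radians. The Python sketch below evaluates that relation and the resulting SWR against a 50 ohm reference for a few added lengths. It is not W5DXP's procedure; the feedpoint impedance, 450 ohm line, 0.9 velocity factor, frequency, and lengths are hypothetical example values, and line loss is ignored.

```python
import math

C_M_PER_US = 299.792458  # speed of light in m/us, so wavelength_m = C / f_MHz


def input_impedance(z_load: complex, z0: float, length_m: float,
                    freq_mhz: float, velocity_factor: float) -> complex:
    """Impedance seen looking into a lossless line terminated in z_load."""
    wavelength_m = C_M_PER_US / freq_mhz * velocity_factor  # wavelength on the line
    beta_l = 2 * math.pi * length_m / wavelength_m  # electrical length in radians
    t = math.tan(beta_l)
    return z0 * (z_load + 1j * z0 * t) / (z0 + 1j * z_load * t)


def swr(z: complex, z_ref: float) -> float:
    """Standing-wave ratio of impedance z relative to a real reference."""
    gamma = abs((z - z_ref) / (z + z_ref))  # reflection coefficient magnitude
    return (1 + gamma) / (1 - gamma)


if __name__ == "__main__":
    # Hypothetical dipole feedpoint impedance and line, for illustration only
    z_feed = 2000 - 800j
    base_length_m = 15.0
    for extra_m in (0.0, 2.0, 4.0, 6.0, 8.0):
        zin = input_impedance(z_feed, 450.0, base_length_m + extra_m, 7.1, 0.9)
        print(f"+{extra_m:.0f} m: Zin = {zin.real:.0f} {zin.imag:+.0f}j ohms, "
              f"SWR(50) = {swr(zin, 50.0):.1f}")
```

Two sanity checks on the relation: a quarter-wave line turns ZL into Z0²/ZL, and a half-wave line repeats ZL unchanged, which is why switching in different lengths moves the impedance the tuner sees.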

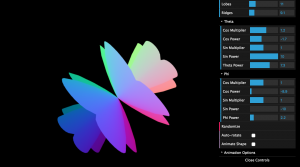

Superformula

Formula generator

“The superformula is a generalization of the superellipse and was first proposed by Johan Gielis in 2003”

from Wikipedia

http://mysterydate.github.io/superFormulaGenerator/
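In polar form the superformula gives a radius for each angle φ: r(φ) = (|cos(mφ/4)/a|^n2 + |sin(mφ/4)/b|^n3)^(-1/n1). The Python sketch below samples that curve into (x, y) points. It is not the linked generator's code; the function names, the defaults a = b = 1, and the 360-point sampling are assumptions.

```python
import math


def superformula_radius(phi, m, n1, n2, n3, a=1.0, b=1.0):
    """Radius r(phi) of the Gielis superformula."""
    t = m * phi / 4.0
    s = abs(math.cos(t) / a) ** n2 + abs(math.sin(t) / b) ** n3
    return s ** (-1.0 / n1)


def superformula_points(m, n1, n2, n3, a=1.0, b=1.0, samples=360):
    """Sample the curve at `samples` evenly spaced angles; return (x, y) pairs."""
    points = []
    for k in range(samples):
        phi = 2.0 * math.pi * k / samples
        r = superformula_radius(phi, m, n1, n2, n3, a, b)
        points.append((r * math.cos(phi), r * math.sin(phi)))
    return points


if __name__ == "__main__":
    circle = superformula_points(4, 2, 2, 2)   # cos^2 + sin^2 = 1, so r = 1
    diamond = superformula_points(4, 1, 1, 1)  # r = 1 / (|cos| + |sin|)
    print(circle[:3])
    print(diamond[:3])
```

With m = 4 and n1 = n2 = n3 = 2 the curve reduces to the unit circle; with n1 = n2 = n3 = 1 it becomes the diamond |x| + |y| = 1. Varying m changes the rotational symmetry, and the exponents control how pinched or bulging the lobes are.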

Some superformula samples

Shadows

by tomthefnkid

TensorFlow

Google’s open-source “deep learning” engine.

By Cade Metz at wired.com

http://www.wired.com/2015/11/google-open-sources-its-artificial-intelligence-engine/?mbid=social_fb

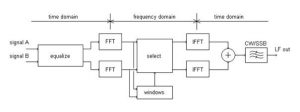

PiTX

Transmitting FM, AM, SSB, SSTV and FSQ with a Raspberry Pi

By @F5OEOEvariste (article at: rtl-sdr.com)

http://www.rtl-sdr.com/transmitting-fm-am-ssb-sstv-and-fsq-with-just-a-raspberry-pi/
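Of the modes listed, FM is the simplest to express mathematically: the carrier's instantaneous frequency follows the audio, so the phase advances by 2π·deviation·m[n]/fs each sample. The Python sketch below produces complex-baseband (I/Q) FM samples, a common representation in software-defined radio. It is not the project's code and says nothing about how the Pi generates RF; the function name, 5 kHz deviation, and 48 kHz sample rate are assumed example values.

```python
import cmath
import math


def fm_modulate(message, deviation_hz, sample_rate_hz):
    """Return complex-baseband FM samples for a message in the range [-1, 1].

    The instantaneous frequency offset is deviation_hz * message[n], so the
    phase advances by 2*pi*deviation_hz*message[n]/sample_rate_hz per sample.
    """
    phase = 0.0
    iq = []
    for m in message:
        phase += 2.0 * math.pi * deviation_hz * m / sample_rate_hz
        phase = math.remainder(phase, 2.0 * math.pi)  # keep phase in [-pi, pi]
        iq.append(cmath.exp(1j * phase))  # constant-envelope sample
    return iq


if __name__ == "__main__":
    fs = 48000.0
    tone = [math.sin(2 * math.pi * 1000.0 * n / fs) for n in range(480)]  # 1 kHz test tone
    iq = fm_modulate(tone, deviation_hz=5000.0, sample_rate_hz=fs)
    print(len(iq), iq[:3])
```

Every sample has magnitude 1 (FM is constant-envelope); the information is carried entirely in how fast the phase rotates.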

MTA.me

“Turns the New York subway system into an interactive string instrument.”

by Alexander Chen (chenalexander.com)